Query Fan-Out SEO for AI Citations

Have you ever ranked a target term and still watched AI answers cite everyone except you? Query fan-out means one prompt can be split into multiple hidden retrieval questions before an answer is generated. This guide shows you how to rebuild your search engine optimization (SEO) page strategy so that citation systems can find you across those sub-queries.

Key Takeaways

- Query fan-out means one prompt can split into many retrieval paths, so a single page rarely covers enough ground to win repeated citations.[1][3]

- AI systems often select from a broad candidate pool, which means your coverage across adjacent intents matters as much as rank for one phrase.[2][6]

- The practical fix is to map sub-queries first, then publish a tight internal cluster around those buckets instead of isolated keyword pages.

In plain English: stop polishing one keyword page and start building bucket coverage first. Imagine a solo consultant spending 2 hours every Thursday on one cluster: in 30 days, covering 4 intent buckets gives AI systems more citation-eligible paths.

Why Query Fan-Out Changes SEO Math

Google describes AI Mode as handling broader and follow-up information needs in one experience.[1] That changes the math because visibility is no longer one result page against one keyword. You need to show up for more of the follow-up questions the AI works through.

Google Search Central emphasizes useful, people-first content for AI features rather than narrow exact-match optimization.[2] Put differently, your page should aim for inclusion across related intents if you want reliable mentions.

A solo consultant can feel this in a single week: Monday you publish for “query expansion,” and Wednesday prospects ask deeper questions. By Friday, AI answers can cite pages that cover those follow-ups while your main page sits unused in the final answer.

I think many teams waste months polishing one page title while retrieval systems test ten angles around the same need.

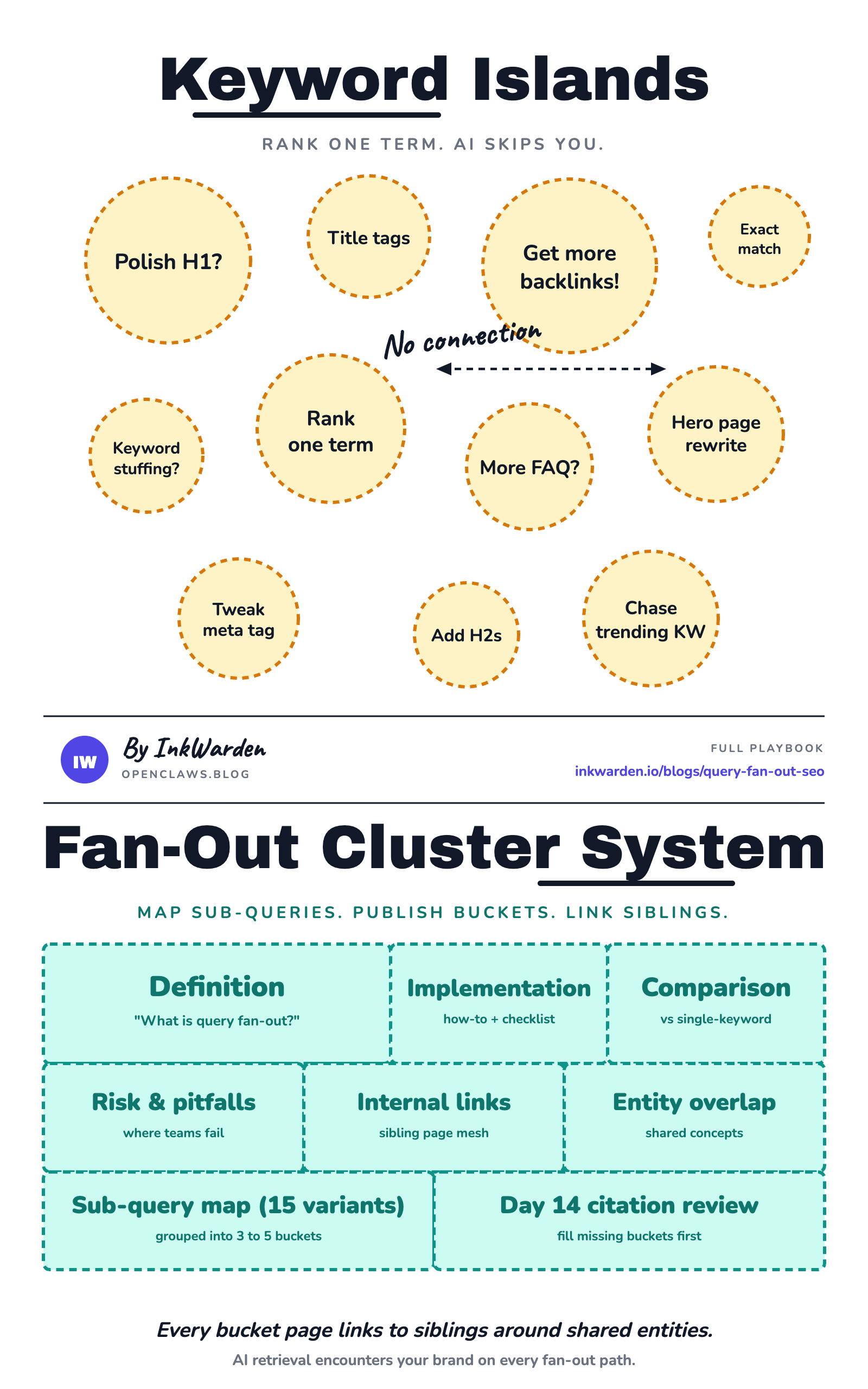

The One Pain-Point: You Rank, But AI Still Skips You

Hidden sub-queries create coverage gaps your main page never answers

Search Engine Land describes query fan-out as splitting one search into multiple sub-queries.[3] In practice, that often means your visible target keyword is only part of what gets retrieved. If your page only answers the surface term, you leave adjacent questions open for competitors.

In plain English: the system asks extra questions behind your back. A freelancer can rank for one phrase and still lose citation share if their posts do not answer the next-step prompts that appear during fan-out.

This is why I tell small teams to stop celebrating rank alone. Rank can still help, but it does not predict AI citations well once fan-out expands intent paths.

Entity and intent adjacency decide citation eligibility more than rank position

Search Engine Land's optimization guidance and Search Engine Journal's reporting on Robby Stein's comments both point in the same direction.[4][5] AI retrieval rewards coverage of related concepts and follow-up intents. If your page never addresses adjacent entities, AI can skip it in final citations.

Imagine a content lead tracking Google query fan-out over 30 days. Their top page ranks on classic search results pages, yet AI answers repeatedly pull from smaller pages that define related entities, tradeoffs, and comparison terms.

The real problem is not weak writing. It is pages that cover too little of the topic.

The One Solution: Build Fan-Out Coverage, Not Keyword Islands (Query Expansion)

Run a fan-out map first before drafting

Before writing anything, export one target prompt and collect at least 15 sub-query variants. You can group them by intent, entity, and decision stage. This is the core query fan-out technique most teams skip.

Semrush explains that fan-out systems can pull from multiple supporting pages, which is one reason mapping adjacent variants first can create a structural advantage.[6] Ahrefs makes a similar point when discussing hidden fan-out queries and adjacent intent coverage.[7]

A one-person agency can do this in 90 minutes on Tuesday morning: collect 15 prompts, sort them into 4 buckets, and assign one page angle to each bucket before writing a single paragraph.

I would skip drafting entirely until this map exists. Writing first and mapping later usually creates disconnected pages you have to rewrite.
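As a rough illustration of the mapping step, here is a minimal Python sketch that sorts collected variants into intent buckets using simple phrase cues. The cue lists, the `assign_bucket` and `build_fanout_map` helpers, and the sample variants are all assumptions made up for this example, and naive substring matching will misfile some prompts, so treat the output as a first pass to review by hand.

```python
from collections import defaultdict

# Hypothetical phrase cues per intent bucket; tune these to your own topic.
# Buckets are checked in this order, so put the most specific cues first.
BUCKET_CUES = {
    "comparison": (" vs ", "versus", "alternative", "compare", "difference"),
    "implementation": ("how to", "how do", "steps", "set up", "workflow"),
    "risk": ("mistake", "risk", "avoid", "problem"),
    "definition": ("what is", "meaning", "explained", "definition"),
}


def assign_bucket(variant: str) -> str:
    """Return the first bucket whose cue appears in the variant, else 'unsorted'."""
    text = variant.lower()
    for bucket, cues in BUCKET_CUES.items():
        if any(cue in text for cue in cues):
            return bucket
    return "unsorted"


def build_fanout_map(variants):
    """Group sub-query variants by bucket, preserving input order."""
    fanout_map = defaultdict(list)
    for variant in variants:
        fanout_map[assign_bucket(variant)].append(variant)
    return dict(fanout_map)


# Sample variants (illustrative only; a real map would hold at least 15).
variants = [
    "what is query fan-out",
    "query fan-out vs keyword research",
    "how to map sub-queries for a cluster",
    "query fan-out mistakes to avoid",
    "query fan-out for ecommerce",
]

fanout_map = build_fanout_map(variants)
for bucket, items in fanout_map.items():
    print(f"{bucket}: {len(items)} variant(s)")
```

Anything that lands in `unsorted` is a prompt to either add a cue or decide whether the variant deserves its own bucket.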

Publish cluster architecture that supports multi query retrieval

Once you have buckets, publish a cluster where each page answers one bucket deeply and links to sibling pages around shared entities. This makes multi query retrieval more likely to encounter your brand repeatedly.

I think this matters more than endless title tweaks because structure changes what the model can retrieve, not just what humans click.
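One way to plan the sibling links is to list the entities each page covers and link any two pages that share at least one. The sketch below assumes a hand-maintained mapping of page slugs to entity sets; the slugs, entities, and the `sibling_links` helper are hypothetical and only illustrate the idea.

```python
from itertools import combinations

# Hypothetical cluster: page slug -> entities the page covers.
pages = {
    "what-is-query-fan-out": {"query fan-out", "sub-queries", "AI Mode"},
    "fan-out-vs-keyword-research": {"query fan-out", "keyword research"},
    "fan-out-mapping-workflow": {"sub-queries", "intent buckets"},
    "fan-out-mistakes": {"intent buckets", "internal links"},
}


def sibling_links(pages):
    """Return {slug: {sibling_slug: shared_entities}} for pages with overlapping entities."""
    links = {slug: {} for slug in pages}
    for a, b in combinations(pages, 2):
        shared = pages[a] & pages[b]
        if shared:
            # Record the link in both directions so each page lists its siblings.
            links[a][b] = shared
            links[b][a] = shared
    return links


links = sibling_links(pages)

# Pages with no shared entities have no natural sibling link yet.
orphans = [slug for slug, siblings in links.items() if not siblings]

for slug, siblings in links.items():
    print(slug, "->", sorted(siblings))
```

A page that shows up in `orphans` is either missing entities it should cover or does not belong in this cluster.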

Worth knowing: this behavior is not a fringe edge case. If your architecture ignores fan-out behavior, your visibility plan is incomplete.

In the next section, you can see how this shift from keyword islands to bucket coverage changes likely citation outcomes in practice.

Comparison

Worth knowing: as of 2026, here is what changes when you move from isolated pages to fan-out clusters. I would not run a rank-only workflow anymore. A solo founder can test this over a 14-day cycle and usually spot where missing buckets block citations.

| Workflow | What You Publish | Fan-Out Coverage | Likely Citation Outcome |
|---|---|---|---|
| Single-keyword page | One article on head term only | Low | Occasional mention, inconsistent reuse |
| Keyword plus FAQ add-on | One main page with light extras | Medium-low | Better long-tail capture, still patchy |
| Fan-out cluster | 3 to 5 intent-bucket pages | High | More repeated inclusion across prompts |
| Fan-out cluster plus citation mesh | Cluster plus entity-based internal links | Very high | Best chance of recurring citation slots |
| Rank-only optimization | Title tags and backlinks only | Unclear | Can rank and still get skipped |

Ahrefs reports that AI Overviews (Google's AI-generated summary feature in Search) can reduce clicks.[8] So when you choose what to fix next, prioritize logs of which sources the AI consulted over rank charts.

Real-World Example

In a recent live training session, one practitioner demonstrated a retrieval-capture workflow while testing commercial-style prompts. They did not stop at the visible answer. They inspected retrieval traces and found that one question expanded into multiple parallel variants. Those variants included localized phrasing tied to user context.

Translation: the AI pulled its citations from the results of several queries, not from one keyword's results list. In one 60-minute session, the practitioner moved from isolated keyword pages to fan-out cluster planning.

That shift changed their publishing decisions immediately. Instead of writing one hero page, they mapped supporting pages for repeated sub-queries. Then they linked those pages through shared entities. I think this is the most practical proof of query fan-out SEO: what you can retrieve repeatedly beats what you can rank once.

Getting Started

- Pick one prompt and expand it. Start here, not in your draft doc. Export one core query and collect at least 15 variants, including follow-up and comparison forms. A solo operator can finish this in one afternoon.

- Group variants into 3 to 5 buckets. Use intent buckets like definition, implementation, comparison, and risk. Keep one page target per bucket.

- Draft pages as a connected cluster. Each page should answer its bucket fully and link to sibling pages where entities overlap. This is where search query expansion becomes useful in execution, not just theory.

- Recheck citation patterns after 14 days. Review which buckets appear in AI answers and fill the missing ones first. Do not start a new keyword until the cluster is complete.

At a glance: 15 prompts, 3 to 5 buckets, 1 page per bucket, citation check on day 14.

Here's the thing: if you only have 5 hours a week, spend 3 on mapping and structure, then 2 on drafting. That split usually outperforms the reverse.


FAQ

What is query fan-out in plain terms?

It means an AI search system can take one user prompt and split it into several related retrieval queries before composing an answer. So your page is competing across a set of hidden sub-questions, not only one visible keyword.[3]

Is query fan-out SEO only for big teams?

No. Start with one topic, map 15 variants, publish 3 bucket pages, and connect them with internal links. Imagine a solo founder running this in a 2-hour Friday block for 4 weeks; that lightweight weekly cadence is enough for a one-person business to build one complete fan-out cluster.

How is this different from normal keyword research?

Classic research often picks one main term and a few secondary terms. Fan-out work starts from hidden sub-query behavior first, then designs content architecture to match retrieval paths. That is a different order of operations and it changes outcomes.

Do I still need to rank in classic search?

Yes, ranking still matters, but in AI answer environments it is not enough. Do not treat rank as the finish line. You need repeated eligibility across adjacent intents so your pages keep appearing in the set of pages the AI considers for citations.

How do I measure query fan-out citation performance?

Track one cluster for 14 days and log which bucket pages appear in AI answers for your test prompt set. If one bucket never appears, treat it as a coverage gap and expand that page before publishing a new cluster.
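The 14-day check can be as simple as a log of test prompts and which bucket pages were cited, then a count per bucket. This is a minimal sketch under assumed data: the `observations` rows, the bucket names, and the `coverage_gaps` helper are hypothetical, and the log itself would be filled in by hand from the AI answers you observe.

```python
from collections import Counter

# Hypothetical 14-day log: one row per test prompt run, listing which of
# your bucket pages were cited in the AI answer (empty list = none cited).
observations = [
    {"day": 1, "prompt": "what is query fan-out", "cited_buckets": ["definition"]},
    {"day": 7, "prompt": "query fan-out vs keyword research",
     "cited_buckets": ["definition", "comparison"]},
    {"day": 14, "prompt": "how to map sub-queries", "cited_buckets": []},
]

# The buckets this cluster is supposed to cover.
buckets = ["definition", "implementation", "comparison", "risk"]


def coverage_gaps(observations, buckets):
    """Return buckets that never appeared in any logged AI answer."""
    counts = Counter(b for row in observations for b in row["cited_buckets"])
    return [b for b in buckets if counts[b] == 0]


gaps = coverage_gaps(observations, buckets)
print("Buckets to expand first:", gaps)
```

With the sample log above, the implementation and risk pages never appear, so those are the pages to expand before starting a new cluster.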

What query fan-out mistakes should I avoid?

The common failures are publishing before mapping variants, merging too many intents into one page, and skipping internal links between sibling pages. Keep one intent bucket per page and link pages on closely related topics directly to each other.

What is a lightweight stack for query fan-out mapping?

Use a simple sheet for prompts, one doc for bucket definitions, and your CMS outline before drafting. The tool choice matters less than keeping a repeatable mapping order every week.

How should local or ecommerce teams adapt query fan-out?

Keep the same method, but tune buckets to buying context. Local teams should include location modifiers and service comparisons, while ecommerce teams should include specs, alternatives, and price-adjacent intent buckets.

References

- [1] Google AI Mode update: query fan-out technique

- [2] Google Search Central: AI features and website guidance

- [3] Search Engine Land guide: what query fan-out is

- [4] Search Engine Land: how to optimize for query fan-out

- [5] Search Engine Journal on Google's fan-out details

- [6] Semrush explainer: query fan-out mechanics

- [7] Ahrefs: hidden fan-out queries and adjacent intent

- [8] Ahrefs: AI Overviews click impact update

Content marketer at InkWarden

Rachel writes about SEO, AEO, and Claude skill files for small teams and solo operators building durable organic growth.
